Internal analytics

The internal analytics system provides the ability to track user behavior and system status for a GitLab instance to inform customer success services and further product development.

These doc pages provide guides and information on how to leverage internal analytics capabilities of GitLab when developing new features or instrumenting existing ones.

Fundamental concepts

Events and metrics are the foundation of the internal analytics system. Understanding the difference between the two concepts is vital to using the system.

Event

An event is a record of an action that happened within the GitLab instance. An example action would be a user interaction such as visiting the issue page or hovering the mouse cursor over the top navigation search. Other actions can result from background system processing, such as a scheduled pipeline succeeding or the instance receiving API calls from a third-party system. Actions are not tracked and turned into recorded events automatically. Instead, if an action helps draw out product insights and supports more informed business decisions, we can track an event when the action happens. At a minimum, the resulting event record holds the information that the action occurred, but it can also contain additional details about the context of the action, such as who performed it or the state of the system at the time.
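The following Python sketch illustrates the idea of an event record: a minimal record only states that an action occurred, while a richer one also carries context. The EventRecord class, its fields, and the record_event helper are hypothetical and are not GitLab's internal event schema or tracking API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Optional


@dataclass
class EventRecord:
    """Hypothetical shape of a recorded event: what happened, when, plus optional context."""
    action: str                      # name of the tracked action, e.g. "feature_used"
    occurred_at: datetime            # when the action happened
    user_id: Optional[int] = None    # context: who performed the action, if known
    context: dict = field(default_factory=dict)  # context: system state at the time


def record_event(action: str, user_id: Optional[int] = None, **context: Any) -> EventRecord:
    """Create a record for an action we explicitly decided to track."""
    return EventRecord(
        action=action,
        occurred_at=datetime.now(timezone.utc),
        user_id=user_id,
        context=dict(context),
    )


# A minimal record only states that the action occurred...
bare = record_event("feature_used")
# ...while a richer one carries context about who acted and the system state.
rich = record_event("feature_used", user_id=42, project_visibility="private")
```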

Metric

A single event record is not informative on its own and might be coincidental. To have a foundation for analysis, we need to look at sets of events that share common traits. This is where metrics come into play. A metric is a calculation performed on pieces of information. For example, a single event documenting a paid user visiting a new feature’s page after the feature was released tells us nothing about the feature’s success. However, if we count the page view events in the week before the release and compare that with the count for the week after the release, we can derive insights about how much the new feature increased interest.

This process leads to what we call a metric. An event-based metric counts the number of times an event occurred, either overall or within a specified time frame. The same event can be used across different metrics, and a metric can count one or multiple events. The count can, but does not have to, be based on a uniqueness criterion, such as only counting distinct users who performed an event.
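To illustrate how an event-based metric is derived, here is a minimal Python sketch over a hypothetical in-memory list of events (the Event class and count_events function are illustrative, not part of GitLab). It counts one or more event actions, optionally restricted to a time frame and optionally counting only distinct users, and reproduces the before/after release comparison described above using made-up sample data.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Iterable, Optional


@dataclass
class Event:
    """Hypothetical minimal event: action name, day it happened, acting user."""
    action: str
    day: date
    user_id: Optional[int] = None


def count_events(
    events: Iterable[Event],
    actions: set,
    start: Optional[date] = None,
    end: Optional[date] = None,
    unique_users: bool = False,
) -> int:
    """Count events whose action is in `actions` and whose day is in [start, end).

    With unique_users=True, each distinct user is counted at most once.
    """
    matching = [
        e for e in events
        if e.action in actions
        and (start is None or e.day >= start)
        and (end is None or e.day < end)
    ]
    if unique_users:
        return len({e.user_id for e in matching if e.user_id is not None})
    return len(matching)


# Made-up sample data around a hypothetical release on 2024-03-11.
release = date(2024, 3, 11)
week = timedelta(days=7)
events = [
    Event("page_viewed", date(2024, 3, 5), user_id=1),
    Event("page_viewed", date(2024, 3, 12), user_id=1),
    Event("page_viewed", date(2024, 3, 12), user_id=2),
    Event("page_viewed", date(2024, 3, 14), user_id=2),
    Event("feature_used", date(2024, 3, 13), user_id=3),
]

before = count_events(events, {"page_viewed"}, release - week, release)  # 1
after = count_events(events, {"page_viewed"}, release, release + week)   # 3
after_users = count_events(events, {"page_viewed"}, release, release + week, unique_users=True)  # 2
both_actions = count_events(events, {"page_viewed", "feature_used"})     # 5
```

The same page_viewed events feed several different metrics here, and the last metric counts two different events, matching the point that events and metrics are not one-to-one.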

Metrics do not have to be based on events. Metrics can also be observations about the state of a GitLab instance itself, such as the value of a setting or the count of rows in a database table.
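Instance-state metrics are observations rather than event counts. The sketch below uses Python's built-in sqlite3 module with an in-memory database; the projects and settings tables and the metric key names are hypothetical stand-ins, not GitLab's schema or real metric definitions.

```python
import sqlite3
from typing import Optional


def count_rows(conn: sqlite3.Connection, table: str) -> int:
    """Observation metric: number of rows currently in `table`.

    The table name is interpolated into the SQL, so it must come from
    trusted code, never from user input.
    """
    (count,) = conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()
    return count


def setting_value(conn: sqlite3.Connection, name: str) -> Optional[str]:
    """Observation metric: current value of a named setting, or None if unset."""
    row = conn.execute("SELECT value FROM settings WHERE name = ?", (name,)).fetchone()
    return None if row is None else row[0]


# Hypothetical instance database with two tables.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE projects (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO projects (name) VALUES (?)", [("alpha",), ("beta",), ("gamma",)])
conn.execute("CREATE TABLE settings (name TEXT PRIMARY KEY, value TEXT)")
conn.execute("INSERT INTO settings (name, value) VALUES ('signup_enabled', 'true')")

# Hypothetical metric key paths mapped to their observed values.
metrics = {
    "counts.projects": count_rows(conn, "projects"),
    "settings.signup_enabled": setting_value(conn, "signup_enabled"),
}
print(metrics)  # {'counts.projects': 3, 'settings.signup_enabled': 'true'}
```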

Instrumentation

- To create an instrumentation plan, use this template.

- To instrument an event-based metric, see the internal event tracking quick start guide.

- To instrument a metric that observes the GitLab instance’s state, see the metrics instrumentation guide.

Data discovery

Event and metrics data is ultimately stored in our Snowflake data warehouse. It can either be accessed directly via SQL in Snowflake for ad-hoc analyses or visualized in our data visualization tool Tableau, which has access to Snowflake. Both platforms need an access request (Snowflake, Tableau).

To track user interactions in the browser, Do-Not-Track (DNT) needs to be disabled. DNT is disabled by default for most browsers.

Tableau

Tableau is a data visualization platform that supports building dashboards and GUI-based discovery of events and metrics. This method of discovery is best suited to users who are familiar with business intelligence tooling, for basic verifications, and for creating persistent, shareable dashboards and visualizations. Access to Tableau requires an access request.

Checking events

Visit the Snowplow event exploration dashboard. This dashboard shows event counts as well as the most frequently fired events. Scroll down to the “Structured Events Firing in Production Last 30 Days” chart and filter for your specific event action. The filter only works with exact names.

Checking metrics

Visit the Metrics exploration dashboard.

The side panel has a filter for the metric path, which is the key_path of your metric, and a filter for the installation ID, including guidance on how to filter for GitLab.com.

Custom charts and dashboards

Within Tableau, you can also build more advanced charts, such as this funnel analysis. To request custom charts and dashboards from the Product Data Insights team, create an issue in their project.

Snowflake

Snowflake lets you query the relevant warehouse tables directly in its UI, using the Snowflake SQL dialect. This method of discovery is best suited to users who are familiar with SQL, and for quick, flexible checks of whether data is correctly propagated. Access to Snowflake requires an access request.

Querying events

The following example query returns the number of daily event occurrences for the feature_used event.

```sql
SELECT
  behavior_date,
  COUNT(*) AS event_occurrences
FROM prod.common_mart.mart_behavior_structured_event
WHERE event_action = 'feature_used'
  AND behavior_date > '2023-08-01' -- restricted minimum date for performance
  AND app_id = 'gitlab' -- use gitlab for production events and gitlab-staging for events from staging
GROUP BY 1
ORDER BY 1 DESC
```

For a list of other tables, refer to the Data Models Cheat Sheet.

Querying metrics

The following example query returns all values reported within the last six months for a metric (here counts.users_visiting_dashboard_weekly), together with the corresponding dim_instance_id:

```sql
SELECT
  date_trunc('week', ping_created_at) AS ping_week,
  dim_instance_id,
  metric_value
FROM prod.common.fct_ping_instance_metric_rolling_6_months -- model limited to last 6 months for performance
WHERE metrics_path = 'counts.users_visiting_dashboard_weekly' -- set to metric of interest
ORDER BY ping_created_at DESC
```

For a list of other metrics tables, refer to the Data Models Cheat Sheet.

Product Analytics

Internal Analytics dogfoods the GitLab Product Analytics functionality, which also lets you visualize events. The Analytics Dashboards documentation explains how to build custom visualizations and dashboards. The custom dashboards accessible within the GitLab project are defined in a separate repository. You can build dashboards based on events instrumented through the internal events system. Only events emitted by GitLab.com are counted in those visualizations.

The Product Analytics group’s dashboard can serve as inspiration on how to build charts based on individual events.

Data availability

The analytics setup differs fundamentally between GitLab.com and GitLab Self-Managed or GitLab Dedicated instances.

Self-Managed and Dedicated

For GitLab 18.0 and later: Self-Managed and Dedicated instances collect event-level data, providing the same detailed insights into product usage that are available on GitLab.com.

For versions prior to 18.0: Only aggregated metrics are available. These metrics are computed once per week on a randomly chosen day and forwarded to Version App via a process called Service Ping. Only the metrics that were instrumented up to the version the instance is running are available. For example, if a metric is instrumented during the development of version 16.9, it will be available on instances running version 16.9 or later, but not on instances running earlier versions such as 16.8. The received payloads are imported into our Data Warehouse once per day.
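The version rule above can be expressed as a simple comparison. This Python sketch assumes plain numeric version strings such as 16.9 or 16.10.2 (suffixes like -ee are not handled); parse_version, metric_available, and collects_event_level_data are hypothetical helpers. Comparing tuples of integers rather than raw strings matters: as strings, '16.10' sorts before '16.9'.

```python
def parse_version(version: str) -> tuple:
    """Turn a numeric version string like '16.9' or '16.10.2' into a tuple of ints."""
    return tuple(int(part) for part in version.split("."))


def metric_available(instrumented_in: str, instance_version: str) -> bool:
    """A metric is reported only by instances running the version it was
    instrumented in, or a later one."""
    return parse_version(instance_version) >= parse_version(instrumented_in)


def collects_event_level_data(instance_version: str) -> bool:
    """Self-Managed and Dedicated instances send event-level data from 18.0 on."""
    return parse_version(instance_version) >= (18, 0)


print(metric_available("16.9", "16.10"))   # True
print(metric_available("16.9", "16.8"))    # False
print(collects_event_level_data("17.11"))  # False
```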

GitLab.com

On our GitLab.com instance both individual events and pre-computed metrics are available for analysis. Additionally, page views are automatically instrumented.

Individual events & page views

Individual events and page views are forwarded directly to our collection infrastructure and from there into our data warehouse. However, at this stage the data is in a raw format that is difficult to query. For this reason the data is cleaned and propagated through the warehouse until it is available in the tables and diagrams pointed out in the data discovery section.

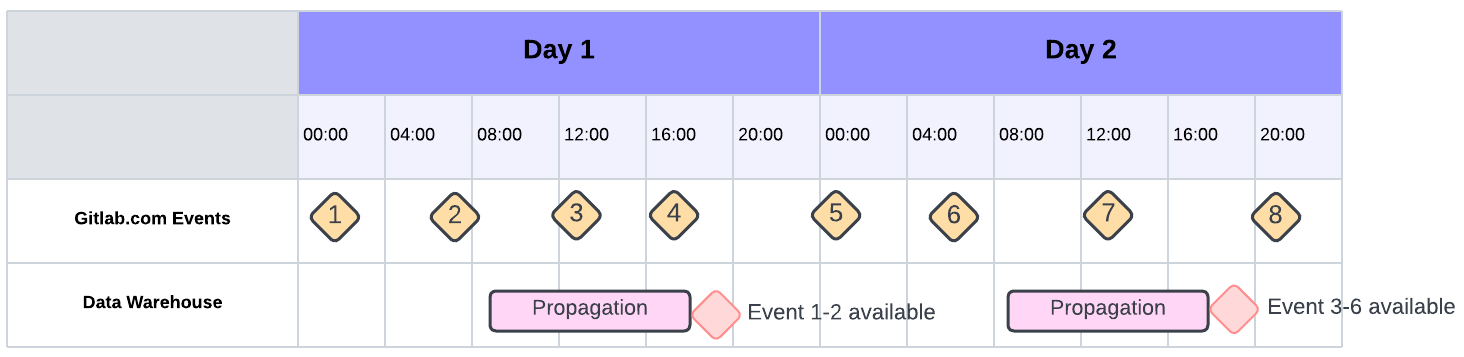

The propagation process takes multiple hours to complete. The following diagram illustrates the availability of events:

Pre-computed metrics

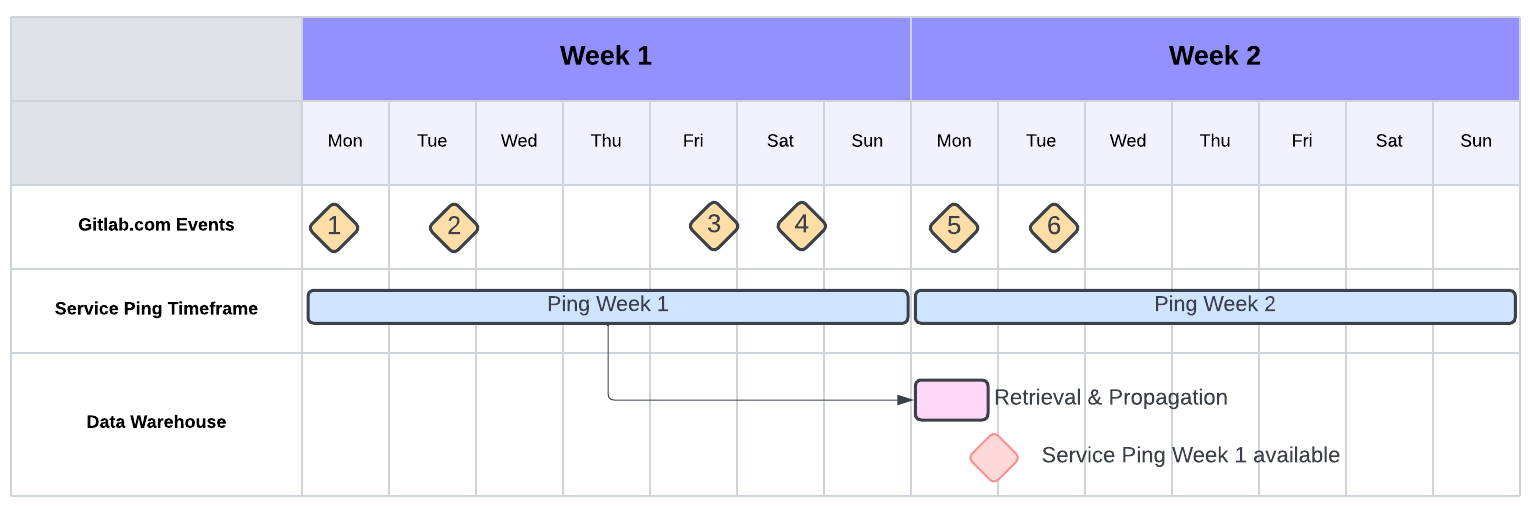

Metrics are computed once per week like on Self-Managed, with the only difference being that most of the computation takes place within the Warehouse rather than within the instance. For GitLab.com this process is started on Monday morning and computes metrics for the time-frame from Sunday 23:59 UTC and this Sunday 23:59 UTC.

The following diagram illustrates the process:
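The GitLab.com weekly time frame can be derived from the run time. This is a sketch under the assumption that the window always ends at 23:59 UTC on the most recent Sunday before the run day and spans exactly seven days; gitlab_com_metric_window is a hypothetical helper, not part of GitLab's pipeline.

```python
from datetime import datetime, timedelta, timezone


def gitlab_com_metric_window(run_at: datetime) -> tuple:
    """Return (start, end) of the weekly metrics window for a run at `run_at`.

    Assumption: end is 23:59 UTC on the most recent Sunday before the run day,
    and start is exactly seven days earlier.
    """
    run_at = run_at.astimezone(timezone.utc)
    # weekday(): Monday == 0 ... Sunday == 6. Days back to the previous Sunday,
    # always at least 1 so a run on a Sunday looks at the Sunday before.
    days_back = (run_at.weekday() + 1) % 7 or 7
    sunday = (run_at - timedelta(days=days_back)).date()
    end = datetime(sunday.year, sunday.month, sunday.day, 23, 59, tzinfo=timezone.utc)
    start = end - timedelta(days=7)
    return start, end


# A run on Monday morning, 2024-03-11.
start, end = gitlab_com_metric_window(datetime(2024, 3, 11, 6, 0, tzinfo=timezone.utc))
print(start.isoformat())  # 2024-03-03T23:59:00+00:00
print(end.isoformat())    # 2024-03-10T23:59:00+00:00
```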

Data flow

On GitLab.com, and on Self-Managed and Dedicated instances running GitLab 18.0 or later, event records are sent directly to a collection system called Snowplow and imported into our data warehouse. On Self-Managed and Dedicated instances running versions prior to 18.0, only event counts are recorded locally. Every week, a process called Service Ping sends the current values of all predefined and active metrics to our data warehouse. For GitLab.com, metrics are calculated directly in the data warehouse.

The following chart aims to illustrate this data flow:

```mermaid
flowchart LR;
  feature-->track
  track-->|send event record - GitLab.com, Self-Managed 18.0+ and Dedicated 18.0+|snowplow
  track-->|increase metric counts|redis
  database-->service_ping
  redis-->service_ping
  service_ping-->|json with metric values - weekly export|snowflake
  snowplow-->|event records - continuous import|snowflake
  snowflake-->vis

  subgraph glb[GitLab Application]
    feature[Feature Code]
    subgraph events[Internal Analytics Code]
      track[track_event / trackEvent]
      redis[(Redis)]
      database[(Database)]
      service_ping[\Service Ping Process\]
    end
  end

  snowplow[\Snowplow Pipeline\]
  snowflake[(Snowflake Data Warehouse)]
  vis[Dashboards in Tableau]
```
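The two paths in this data flow can be modeled in a few lines of Python. This is a simplified, hypothetical sketch: the Instance class stands in for a GitLab installation, a list stands in for the Snowplow pipeline, and a Counter stands in for the Redis counters. It ignores that GitLab.com computes most metrics in the warehouse, and none of the names are GitLab APIs.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Instance:
    """Hypothetical model of one GitLab installation's analytics plumbing."""
    is_gitlab_com: bool
    version: tuple
    snowplow_queue: list = field(default_factory=list)      # stands in for the Snowplow pipeline
    local_counts: Counter = field(default_factory=Counter)  # stands in for Redis counters

    def sends_event_records(self) -> bool:
        # GitLab.com, and Self-Managed or Dedicated on 18.0+, send individual records.
        return self.is_gitlab_com or self.version >= (18, 0)

    def track_event(self, action: str, **context) -> None:
        # Every tracked event increases the local metric count...
        self.local_counts[action] += 1
        # ...and, where supported, the full record also goes to the collector.
        if self.sends_event_records():
            self.snowplow_queue.append({"action": action, **context})

    def service_ping_payload(self) -> dict:
        # Weekly export: aggregated metric values only, no individual records.
        return dict(self.local_counts)


old = Instance(is_gitlab_com=False, version=(17, 11))
new = Instance(is_gitlab_com=False, version=(18, 0))
for instance in (old, new):
    instance.track_event("feature_used", user="a")
    instance.track_event("feature_used", user="b")

print(old.snowplow_queue)          # []
print(len(new.snowplow_queue))     # 2
print(old.service_ping_payload())  # {'feature_used': 2}
```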

Data privacy

For GitLab 18.0 and later: GitLab collects event-level data from Self-Managed instances with pseudonymized identifiers to protect privacy.

For versions prior to 18.0: GitLab only receives event counts or similarly aggregated information from Self-Managed instances.

On GitLab.com, user identifiers for individual events are pseudonymized.

A detailed description of the kind of data collected through the Internal Analytics system is available in our handbook.
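The handbook is the authoritative description of how identifiers are pseudonymized. Purely as an illustration of the general idea, and not GitLab's actual scheme, a keyed hash (HMAC-SHA256 from Python's standard library) maps each user ID to a stable token: events from the same user can still be grouped, but the token does not reveal the raw ID to anyone without the key. The pseudonymize function and the key are hypothetical.

```python
import hashlib
import hmac


def pseudonymize(user_id: int, secret_key: bytes) -> str:
    """Replace a user ID with a keyed SHA-256 digest (HMAC).

    The same ID and key always yield the same token, so events can still be
    grouped per user, while the raw ID is not exposed to anyone lacking the key.
    """
    return hmac.new(secret_key, str(user_id).encode("utf-8"), hashlib.sha256).hexdigest()


key = b"example-secret-key"  # hypothetical; a real key would be kept secret
token = pseudonymize(42, key)
print(len(token))                      # 64
print(token == pseudonymize(42, key))  # True
print(token == pseudonymize(43, key))  # False
```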